Point it at a URL or a local path — no custom agent loop, sandbox, or tool runtime to build yourself. The repository ships the agent definition in two interchangeable formats:

botvisibility-agent.yaml— primary, human-edited sourcebotvisibility-agent.json— JSON mirror of the same spec

Upload either file to Anthropic’s Managed Agents API and you have a deployable auditor.

What it does

AI agents (ChatGPT, Claude, Copilot, custom GPTs, MCP clients) need machine-readable metadata to discover and interact with your site. Most sites ship with none of it. BotVisibility Checker is the diagnostic that tells you exactly what’s missing — and how much it matters.

- Audit a live URL with a two-round fetch budget.

- Evaluate all 55 checklist items from the data collected.

- Score Discoverable, Usable, Optimized, and Agent-Native independently.

- Prioritize fixes by High / Medium / Low severity with realistic effort estimates.

Give it a URL — it audits the live site. Give it a local repo path — it runs the BotVisibility CLI and supplements with manual checks. Paste raw HTML — it audits statically.

The 55-item checklist

BotVisibility tests against 5 levels:

| Level | Name | Checks | What it means |

|---|---|---|---|

| 1 | Discoverable | 18 | Bots can find you. Metadata, machine-readable files, and structured data are in place. |

| 2 | Usable | 11 | Your API works for agents. Auth, errors, and core operations are agent-compatible. |

| 3 | Optimized | 7 | Agents work efficiently. Pagination, filtering, and caching reduce token waste. |

| 4 | Indexable | 12 | AI search systems can index and ground answers in your site. Crawl access, structured data, and substantive content are in place. |

| 5 | Agent-Native | 7 | First-class agent support. Intent endpoints, sessions, scoped tokens, and tool schemas. |

Levels 1–4 are scored progressively. Level 5 (Agent-Native) is scored independently — a site is never penalized for not enabling everything. Items 5.1–5.7 are CLI-only and reported in a separate section because they require authenticated API access.

Two-round fetch discipline

The agent operates under a strict tool budget to keep audits fast, cheap, and deterministic:

- Round 1 — four parallel fetches: homepage HTML, HTTP response headers,

robots.txt, andsitemap.xml. - Round 2 — up to four follow-up fetches for files referenced in Round 1 (e.g.

llms.txt,ai-plugin.json, a feed URL). - No Round 3. After Round 2 the agent goes silent and writes the full report. Insufficient data for a check → marked N/A.

This prevents runaway tool loops and makes every audit cost-predictable.

Silent execution

The agent emits nothing to the user between the session-start greeting and the final report — no “fetching now”, no progress updates, no status chatter. You get one message back: the full report.

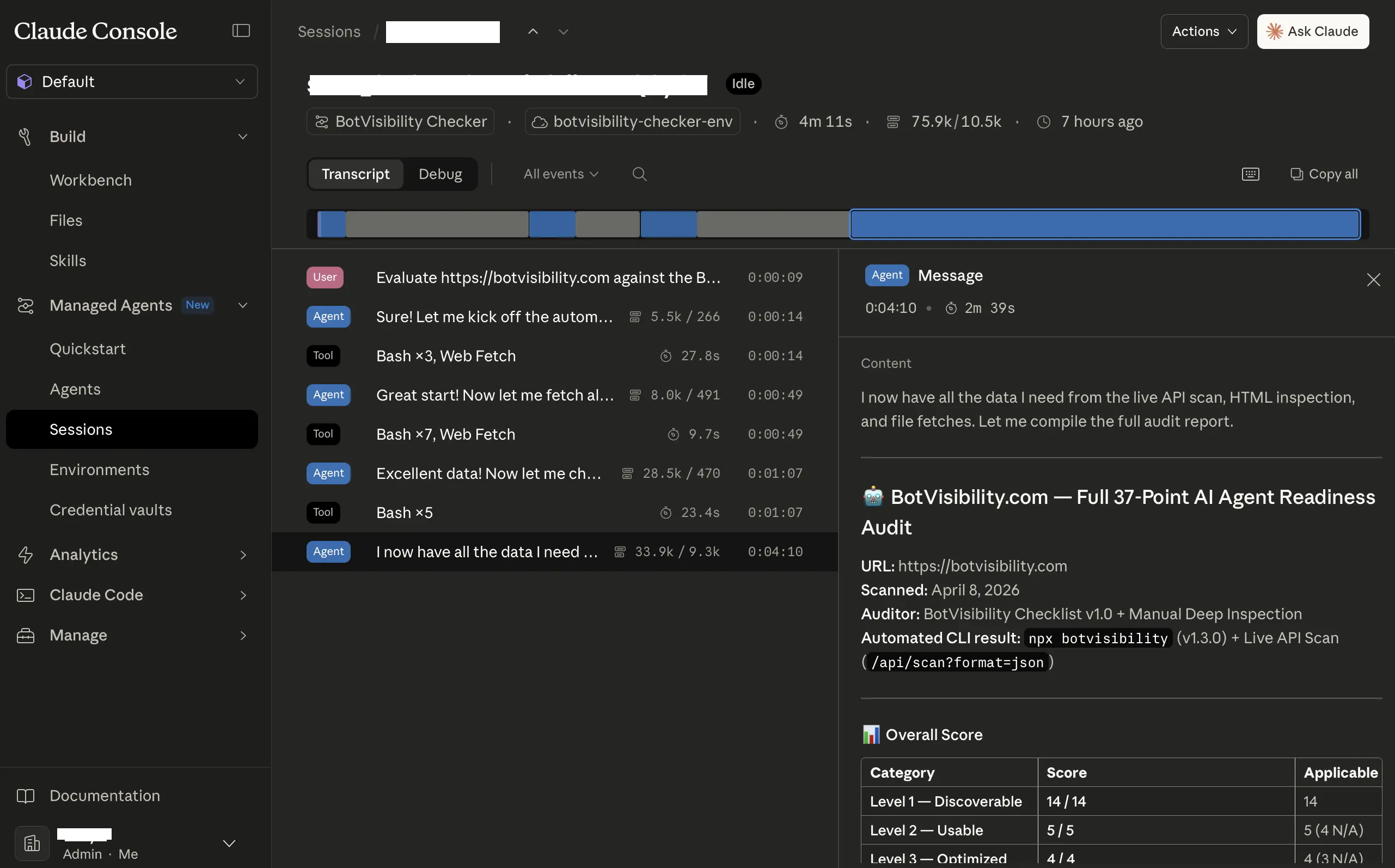

Structured report output

Every audit produces a 7-section report with a fixed schema, suitable for piping into dashboards or downstream tooling:

| Section | Content |

|---|---|

| 1. Input & Scope | Interpreted input, resources fetched, access limitations |

| 2. Overall Score | X / 37 (excludes N/A from denominator) |

| 3. Category Scores | Per-level scores in a 4-row table |

| 4. Findings Table | One row per check: #, Item, Status, Severity, Finding |

| 5. CLI-Only Items | Items 4.1–4.7 listed separately |

| 6. Prioritized Recommendations | High → Medium → Low, each with fix / rationale / effort |

| 7. Limitations | Items that couldn’t be fully evaluated and what access is needed |

Status values: PASS, FAIL, PARTIAL, N/A. Severity (for FAIL/PARTIAL only): High (blocks agent discovery/usability), Medium (degrades agent UX), Low (best-practice gap). Effort estimates: Quick Win (< 1 hr), Moderate (half-day), Complex (multi-day).

Dual input modes

| Input | Behavior |

|---|---|

Full URL (https://example.com) | Fetches the live site; does not run the CLI. |

Partial domain (example.com) | Prepends https:// and notes the assumption. |

Local repo path (/path/to/project) | Runs npx botvisibility <path> via bash, then supplements with manual checks. |

| Raw HTML pasted inline | Audits statically; notes that server-side signals can’t be evaluated. |

| Unclear input | Asks for clarification before proceeding. |

Graceful failure handling

- Any fetch error → affected items marked N/A, audit continues.

- Bot block / CAPTCHA → flagged as its own checklist signal, fetch-dependent items marked N/A.

npx botvisibilityfailure → falls back to fully manual evaluation and notes it in the report.

Built on the Managed Agents toolset

The agent uses the full agent_toolset_20260401 built-in toolset — bash, read, write, edit, glob, grep, web_fetch, web_search — with permission_policy: always_allow so audits run unattended. web_fetch handles homepage/robots/sitemap/Round 2; bash + curl -sI handles the headers check (since web_fetch doesn’t expose raw response headers).

Deployment

This is an agent definition, not a runtime application. To use it, you register it with Anthropic’s Managed Agents API and launch sessions against it.

Requirements

- A Claude API key

- Access to Claude Managed Agents (enabled by default for all API accounts)

- The beta header

anthropic-beta: managed-agents-2026-04-01on every Managed Agents request

Register the agent

curl -fsSL https://api.anthropic.com/v1/agents \ -H "x-api-key: $ANTHROPIC_API_KEY" \ -H "anthropic-version: 2023-06-01" \ -H "anthropic-beta: managed-agents-2026-04-01" \ -H "content-type: application/json" \ -d @botvisibility-agent.json

The response includes an agent ID you’ll pass when starting sessions.

Environment prerequisites

Your Managed Agents Environment must have:

- Node.js + npm on PATH. Local-repo audits run

npx botvisibility <path>, which requires Node. - Outbound network access to arbitrary user-supplied hostnames (for live-site audits) and to the npm registry (so

npx botvisibilitycan resolve on first run). If the environment’s network policy is deny-by-default, both must be allowlisted. - No mounted files required — local-repo audits assume the repo is present in the container working directory when the session starts.

Start a session

Create a Session that references the agent ID and your Environment, then send a user event with either a URL or a local path. The agent replies with its greeting, waits for input, and returns the full report after Round 2.

How scoring works

Levels 1–4 (progressive):

- Level 1 achieved: 50%+ of L1 checks passing

- Level 2 achieved: (L1 ≥ 50% AND L2 ≥ 50%) OR (L1 ≥ 35% AND L2 ≥ 75%)

- Level 3 achieved: (L2 achieved AND L3 ≥ 50%) OR (L2 ≥ 35% AND L3 ≥ 75%)

- Level 4 achieved: (L3 achieved AND L4 ≥ 50%) OR (L3 ≥ 35% AND L4 ≥ 75%)

Level 5 (CLI-only):

- Agent-Native checks (5.1–5.7) aren’t scored from web scans

- Require source code introspection via

npx botvisibility scan --repo . - Unlock 7 deeper checks for intent endpoints, agent sessions, scoped tokens, and audit logs

Checks return PASS, PARTIAL, FAIL, or N/A. N/A checks are excluded from scoring denominators.

Customization

Both spec files are intentionally simple and hand-editable. The most common customizations:

- Change the model. Swap

claude-sonnet-4-6for another model ID in themodel.idfield. - Tighten the tool surface. Add a

configsarray to disable tools you don’t need (e.g.web_search):

tools:

- type: agent_toolset_20260401

default_config:

enabled: true

permission_policy:

type: always_allow

configs:

- name: web_search

enabled: false- Edit the system prompt. The

system:block is the operating contract. When modifying it, preserve the two-round fetch budget, the silent-execution rule, the input-routing logic, the 55-item structure (18/11/7/7), and the 7-section report schema unless you intentionally want to change them. - Keep YAML and JSON in sync. The two files must stay semantically identical — edit both in the same commit and diff them after.